AI False Positive Filtering

Triage time is the silent cost of any scanner. Every finding a security engineer has to read, reproduce, and dismiss is engineering time that doesn't ship a fix. AI False Positive Filtering uses an LLM-based reviewer to read each finding the way a senior security engineer would, and auto-resolves the ones that don't hold up: the status flips to False Positive, with written reasoning attached, before the issue ever lands in your queue.

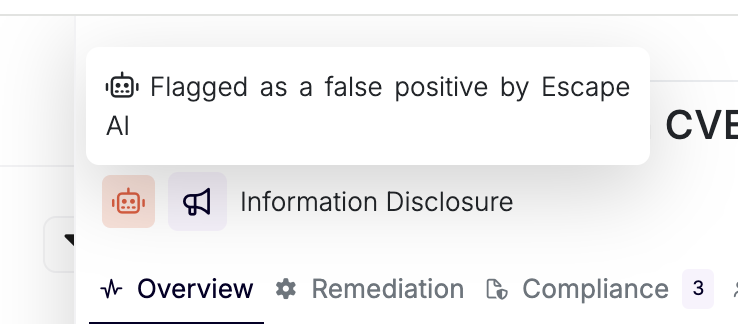

The red robot chip marks issues Escape AI auto-resolved as false positives.


What It Does

For every new issue produced by a scan, Escape runs a dedicated AI reviewer that:

- Reads the full finding context: name, severity, category, target, evidence attachments, and any rule-specific review instructions.

- Decides whether the evidence actually supports the claim, using an explicit decision matrix:

  - Technically incorrect or evidence is wrong: False Positive.

  - Technically correct and exploitable in context: True Positive.

  - Technically correct but not exploitable in context: valid Informational or Low finding, not a false positive.

- Writes back three fields on the issue: a boolean verdict, a long-form reasoning rendered as markdown in the issue overview, and a short summary.

- Auto-sets the issue status to FALSE_POSITIVE when the verdict is positive (that is, the reviewer judged the finding a false positive). The issue lands directly in the False Positive bucket: nobody on your team has to triage it first.

The reviewer is intentionally conservative. It only flips an issue when the context contradicts the evidence. It never marks a finding as a false positive just because the target is a known-vulnerable, demo, or test environment, and it never demotes a Low or Informational finding to false positive: those stay as valid non-critical findings.
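The decision matrix and the status rule described above can be sketched as two small pure functions. This is an illustration only, not Escape's implementation: the names `Outcome`, `Assessment`, `decide`, and `status_after_review` are hypothetical, and the two boolean inputs stand in for judgments the LLM reviewer makes from the finding context.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    FALSE_POSITIVE = "false_positive"
    TRUE_POSITIVE = "true_positive"
    VALID_LOW_OR_INFO = "valid_low_or_info"


@dataclass(frozen=True)
class Assessment:
    # Hypothetical inputs: in the product these are judgments made by the reviewer.
    technically_correct: bool      # does the evidence support the claim?
    exploitable_in_context: bool   # can the issue be exploited on this target?


def decide(assessment: Assessment) -> Outcome:
    """Apply the three-row decision matrix."""
    if not assessment.technically_correct:
        return Outcome.FALSE_POSITIVE
    if assessment.exploitable_in_context:
        return Outcome.TRUE_POSITIVE
    # Correct but not exploitable: a valid Low/Informational finding, never an FP.
    return Outcome.VALID_LOW_OR_INFO


def status_after_review(current_status: str, outcome: Outcome) -> str:
    """Only a false-positive verdict changes the issue status."""
    if outcome is Outcome.FALSE_POSITIVE:
        return "FALSE_POSITIVE"
    return current_status
```

Note that the environment type (demo, test, known-vulnerable) is deliberately absent from the inputs in this sketch, mirroring the rule that it never drives the verdict on its own.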

Why It Saves You Time

In a typical mid-to-large scan, a meaningful share of issues reduces to "yes, the scanner saw the pattern, but the evidence doesn't hold up in this context". Without filtering, every one of those still costs:

- A security engineer reading the evidence.

- A reproduction attempt, sometimes against staging.

- A status update and a comment explaining why it was dismissed.

With filtering on, those issues arrive already marked False Positive, with the reasoning attached. Your team reads the reasoning to confirm, agrees in seconds, and moves on. The triage queue shrinks to issues that actually need a decision.

For customers running continuous scans across dozens of assets, this turns multi-hour weekly triage sessions into focused reviews of the True Positive backlog.

Where You See It in the Product

- Issue side panel: the red robot chip appears in the header next to the severity badge whenever the AI flagged the finding and the status is FALSE_POSITIVE. Hovering it shows "Flagged as a false positive by Escape AI".

- Issue overview: a dedicated AI False Positive Reasoning card renders the model's full markdown explanation, so the engineer reviewing can read the argument before accepting or overriding it.

- Issue list: an AI False Positive filter (True / False) lets you slice the queue, for example to audit a sample of AI-flagged findings before trusting the system.

- Issue row: the same red robot chip shows inline in the issues table.

If you disagree with a verdict, change the status back from FALSE_POSITIVE to OPEN (or any other status). The chip and reasoning stay visible for traceability, but the issue re-enters your active queue.
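One way to act on the audit suggestion above is to draw a random sample of AI-flagged issues and track how often a human ends up reopening them. The following is a minimal sketch, assuming issues have been exported as plain dictionaries. The field names `ai_false_positive`, `status`, and `human_status` are hypothetical and do not describe Escape's API or export format.

```python
import random
from typing import Dict, List

Issue = Dict[str, object]


def sample_ai_flagged(issues: List[Issue], k: int, seed: int = 0) -> List[Issue]:
    """Pick up to k AI-flagged issues that are still marked FALSE_POSITIVE."""
    flagged = [
        issue for issue in issues
        if issue.get("ai_false_positive") is True
        and issue.get("status") == "FALSE_POSITIVE"
    ]
    rng = random.Random(seed)  # fixed seed keeps the audit sample reproducible
    return rng.sample(flagged, min(k, len(flagged)))


def override_rate(audited: List[Issue]) -> float:
    """Share of audited issues that a human moved out of FALSE_POSITIVE."""
    if not audited:
        return 0.0
    reopened = sum(
        1 for issue in audited if issue.get("human_status") != "FALSE_POSITIVE"
    )
    return reopened / len(audited)
```

A low override rate on a reasonably sized sample is one signal that the verdicts can be trusted for your environment; what counts as "low" is a judgment call for your team.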

How Escape Learns from Your False-Positive Feedback

Every time your team marks an issue as a false positive (whether you're confirming an AI verdict or overriding the AI to flag a finding the model missed), that signal feeds back into how Escape improves the system over time.

Concretely, your false-positive feedback shapes:

- Reviewer prompts: recurring patterns we see across customer feedback inform the explicit rules baked into the reviewer's instructions (the decision matrix, the "don't demote Low to FP" rule, and similar guardrails came from exactly this kind of signal).

- Detector tuning: when a specific rule produces a high rate of false positives across tenants, the security research team revisits the underlying detector, not just the AI filter.

- Evaluation set: confirmed false positives become regression cases we use to validate that future model updates don't reintroduce noise we already eliminated.
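The evaluation-set idea above amounts to a regression check: replay confirmed false positives through a candidate reviewer and list any it no longer flags. A minimal sketch under stated assumptions follows. The `Case` shape, the `find_regressions` helper, and the reviewer callable are all hypothetical stand-ins, not Escape's internal tooling.

```python
from typing import Callable, Dict, List

Case = Dict[str, object]
# A reviewer takes a case and returns True when it judges it a false positive.
Reviewer = Callable[[Case], bool]


def find_regressions(confirmed_false_positives: List[Case], reviewer: Reviewer) -> List[str]:
    """Return ids of confirmed false positives the reviewer no longer flags."""
    return [
        str(case["id"])
        for case in confirmed_false_positives
        if not reviewer(case)
    ]


def stub_reviewer(case: Case) -> bool:
    # Stand-in for a real model call: flags a case when its evidence
    # does not support the claim.
    return not case["evidence_supports_claim"]


cases = [
    {"id": "fp-1", "evidence_supports_claim": False},
    {"id": "fp-2", "evidence_supports_claim": True},
]
missed = find_regressions(cases, stub_reviewer)
```

An empty result means the candidate reviewer still catches every case in the set. In this toy data, `fp-2` would be reported because the stub no longer flags it.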

We don't train a per-tenant model on your data, and the reviewer doesn't read other organizations' findings. Improvements ship for everyone through prompt and detector updates, not through cross-tenant model fitting.

The simplest way to help: keep marking issues honestly. Every status change is a vote on whether Escape's reviewer is calibrated for your environment.